What if the issue with AI isn’t that it’s out to take our jobs, but that it could distort our reality? Despite the merits of AI, some organisations are reluctant to implement it because they judge that the risk of data loss outweighs the potential benefits. What if the real risk of AI stems from improper design, implementation and maintenance? Many organisations roll out new systems at pace in a “set it and forget it” manner, and AI systems are no different: security is treated as an afterthought and only taken seriously after a breach.

Without clear trust boundaries and controls, AI becomes a significant threat vector for stealing sensitive data, personally identifiable information (PII) and intellectual property (IP), and for manipulating the knowledge base of AI systems. Before deploying such an influential tool, it is in the public interest to architect a robust solution with clear trust boundaries and data controls that mitigates the risk of data loss and upholds trust in enterprise AI.

This article will discuss enterprise AI systems, outlining the risks, how to maintain AI systems deployed within enterprises, and why secure AI architecture and engineering decisions must be made ahead of going live.

Definitions & Concepts

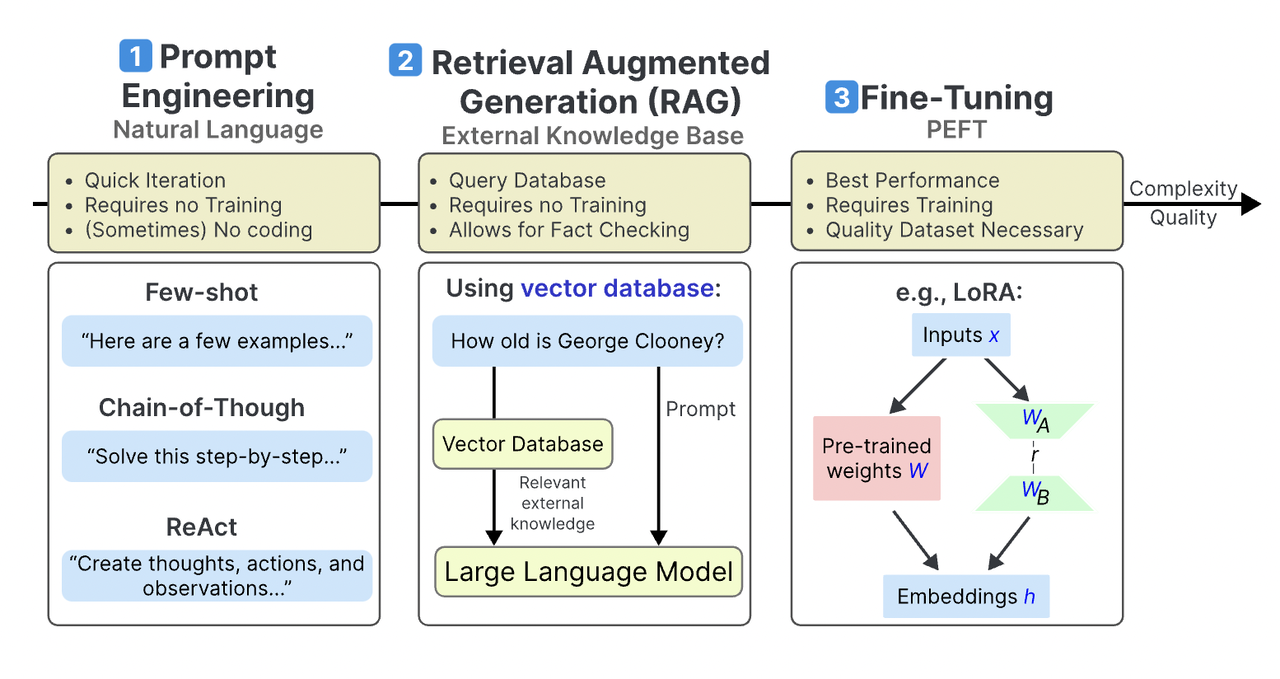

- Large Language Model (LLM) - a machine learning model that can comprehend and generate human language, trained on large datasets (Cloudflare).

- AI system - a machine-based system that is designed to operate with varying levels of autonomy and that may exhibit adaptiveness after deployment (EU Artificial Intelligence Act).

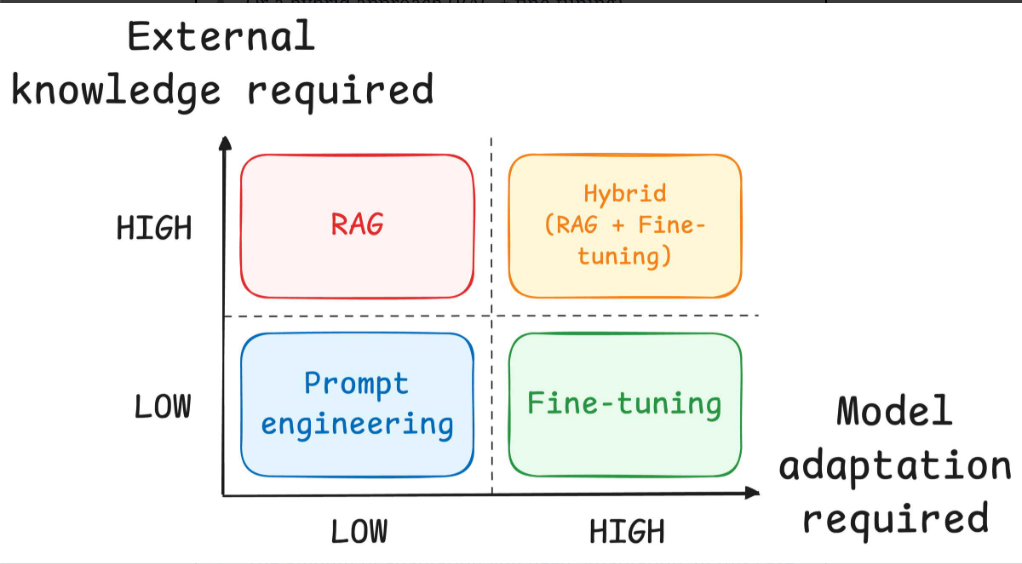

- Retrieval Augmented Generation (RAG) - the process of optimising the output of a large language model, so it references an authoritative knowledge base outside of its training data sources before generating a response (AWS).

- Fine Tuning - the further training of a pre-trained LLM on a task-specific dataset (Google).

- Prompt Engineering - the practice of designing inputs for AI tools that will produce optimal outputs (McKinsey).

- Architecture-First Approach - the principle that software architectural design must be completed before implementation begins, so the solution meets technical and operational requirements (Kibru et al., 2020).

- Trust Boundary - a logical construct used to demarcate areas of the system with different levels of trust (Microsoft).

What Enterprise AI Security Architecture Means

Enterprise AI security architecture is the structured design of controls around models, prompts, data flows, retrieval layers, user permissions, system integrations, and monitoring. In practice, secure enterprise AI means deciding what data the model can access, what systems it can influence, who can use it, how it is governed, and what happens when outputs are wrong, unsafe, or manipulated.

A strong enterprise AI security architecture should define trust boundaries, role-based access, logging, approval logic, retrieval controls, and rules for how model outputs are consumed by downstream systems. This is what turns AI from an experimental tool into a secure enterprise capability.
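As a rough illustration of role-based access and approval logic at the point where model outputs reach downstream systems, the sketch below checks a model-proposed action before anything is executed. The role names, action names, and the `decide` function are hypothetical and chosen only for this example; a real deployment would load its policy from the organisation's own identity and workflow systems.

```python
from dataclasses import dataclass

# Hypothetical policy tables (assumptions for illustration only):
# which roles may trigger which actions, and which actions always
# need a human approver before a downstream system acts on them.
ROLE_ALLOWED_ACTIONS = {
    "analyst": {"summarise_ticket", "draft_reply"},
    "admin": {"summarise_ticket", "draft_reply", "reset_password"},
}
REQUIRES_APPROVAL = {"reset_password"}


@dataclass(frozen=True)
class ProposedAction:
    name: str          # action the model asked a downstream system to take
    requested_by: str  # role of the human user behind the session


def decide(action: ProposedAction, approved_by_human: bool = False) -> str:
    """Return 'deny', 'needs_approval', or 'allow' for a model-proposed action."""
    allowed = ROLE_ALLOWED_ACTIONS.get(action.requested_by, set())
    if action.name not in allowed:
        return "deny"  # unknown roles and unlisted actions are denied by default
    if action.name in REQUIRES_APPROVAL and not approved_by_human:
        return "needs_approval"
    return "allow"
```

The design choice worth noting is the default: anything not explicitly listed is denied, so a manipulated model output cannot reach a system that the user's role was never granted.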


How Enterprise AI Systems Work

AI remains a growing technology trend, with individuals and organisations integrating AI systems into their day-to-day lives. In 2024, Deloitte reported that 36% of the UK population had used Generative AI, while the use of AI at work also increased. Adoption by both individuals and corporations makes trust in AI a matter of public safety.

At a high level, enterprise AI systems use data, prompts, models, retrieval pipelines, and business workflows to generate outputs such as recommendations, summaries, decisions, content, and actions. Data quality determines whether the model produces reliable, trustworthy information, and models must be monitored and updated to remain accurate over time.

Image Source: LinkedIn - Pavan Belagatti

Image Source: Daily Dose of DS - Avi Chawla

How Enterprises Should Architect AI Around Unstructured Content

Enterprises dealing with unstructured content such as documents, PDFs, policies, tickets, emails, contracts, knowledge articles, chat logs, and meeting notes should avoid sending everything directly into a model without control. Instead, they should architect AI around content classification, retrieval controls, data minimisation, access-aware indexing, and clear rules for which sources are authoritative.

A practical architecture for AI around unstructured content typically includes a governed knowledge layer, metadata tagging, permission-aware search, redaction or masking for sensitive content, and logging of who queried what. This matters because unstructured content often contains the very information enterprises are most worried about exposing: PII, contract details, internal strategy, credentials, and regulated data.
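A minimal sketch of the governed knowledge layer described above might look like the following. It assumes each document carries an `allowed_groups` metadata tag set at ingestion, uses a simple keyword match in place of a real search engine, and masks only email addresses as a stand-in for broader redaction rules. The class and field names are hypothetical.

```python
import re
from dataclasses import dataclass, field

# Simplified pattern for illustration; real redaction would cover more PII types.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


@dataclass
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset  # metadata tag assigned when the document is ingested


@dataclass
class GovernedIndex:
    documents: list
    query_log: list = field(default_factory=list)

    def search(self, user: str, user_groups: set, term: str) -> list:
        """Return (doc_id, masked_text) pairs the user is entitled to see."""
        self.query_log.append((user, term))  # record who queried what
        results = []
        for doc in self.documents:
            if not (doc.allowed_groups & user_groups):
                continue  # entitlement filter runs before any text can reach a model
            if term.lower() in doc.text.lower():
                masked = EMAIL_PATTERN.sub("[REDACTED_EMAIL]", doc.text)
                results.append((doc.doc_id, masked))
        return results
```

Filtering on entitlements before retrieval, rather than asking the model to withhold content it has already seen, keeps the trust boundary in code that can be audited.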

Secure enterprise AI for unstructured content should include:

- content inventory and classification before ingestion

- role-based access to knowledge sources

- retrieval filtering based on user entitlements

- sensitive document exclusion or masking rules

- approval gates for high-impact workflows

- monitoring for unusual query patterns or bulk extraction behaviour
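The last item in the list, monitoring for bulk extraction behaviour, can be approximated with a sliding-window counter. The sketch below is one possible approach under stated assumptions: the `ExtractionMonitor` class, its thresholds, and the idea of counting distinct documents per user are illustrative choices, and timestamps are assumed to arrive in non-decreasing order.

```python
from collections import defaultdict, deque


class ExtractionMonitor:
    """Flags users who retrieve many distinct documents within a short window."""

    def __init__(self, max_docs: int = 50, window_seconds: float = 300.0):
        # Thresholds are placeholders; tune them against normal usage patterns.
        self.max_docs = max_docs
        self.window_seconds = window_seconds
        self._events = defaultdict(deque)  # user -> deque of (timestamp, doc_id)

    def record(self, user: str, doc_id: str, timestamp: float) -> bool:
        """Record one retrieval; return True if the user now exceeds the threshold."""
        events = self._events[user]
        events.append((timestamp, doc_id))
        # Drop events that have fallen out of the sliding window.
        while events and timestamp - events[0][0] > self.window_seconds:
            events.popleft()
        distinct_docs = {seen_id for _, seen_id in events}
        return len(distinct_docs) > self.max_docs
```

For example, with `ExtractionMonitor(max_docs=3, window_seconds=60)`, a user who retrieves a fourth distinct document within a minute is flagged, while repeated reads of the same document are not.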

The Practical Limits of Enterprise AI Security

In an ideal world, before any code is written, you would invite all stakeholders, including security, to help build an application that is secure by design. In reality, however, a minimum viable product is often needed quickly to gauge demand before a larger investment is made.

Practical Steps: How to Fortify AI Implementations

- Define your AI workforce objective.

- Create an inventory of AI systems in use.

- Create an AI skills development roadmap for the workforce.

- Review integration of AI with existing workflows.

- Create a governance plan for AI systems.

- Create an AI security maturity roadmap.

- Create role-based permissions for AI use.

- Review architecture, permissions, trust boundaries, and controls for AI systems.

Common AI Failure Points

| Decision Integrity Risk | Enterprise Compromise Risk |
|---|---|
| Prompt injection and unsafe model behaviour. | Sensitive data leakage and overexposed context. |
| Retrieval poisoning or knowledge base abuse. | Excessive agent privileges across business systems. |
| Model supply chain risk. | Insecure output handling and unsafe automation. |
| Monitoring and governance failure. | Insider misuse or policy bypass. |

Why Secure AI System Architecture Must Start with Design

The risk potential for AI systems is high, so architectural design choices must be made deliberately. Embedding security from the design phase enables trust boundary mapping, authentication checkpoints, data flow visibility, and identification of crown jewels. This makes secure AI architecture and engineering far more effective than retrofitting controls later.

What Successful AI Adoption Requires

Invest in AI skills

Organisations should include AI skills development as part of professional development and operational readiness, especially where teams will use AI in security-sensitive workflows.

Adapt workflows to include AI

The successful implementation of AI hinges on complete integration within existing workflows rather than standalone deployments.

| Standalone AI | Integrated AI |
|---|---|
| Uses generic context | Uses ticket, asset, user, and SLA context |
| No built-in approval logic | Works within routing and approval rules |
| Hard to audit | Logged in the workflow |
| Optional and inconsistent | Standardised and repeatable |
| Limited measurable value | Easier to tie to workflow KPIs |